RAG vs CAG

By Ashish Thanki (@ashish__thanki)

RAG vs. CAG: Understanding the Differences in Generative AI

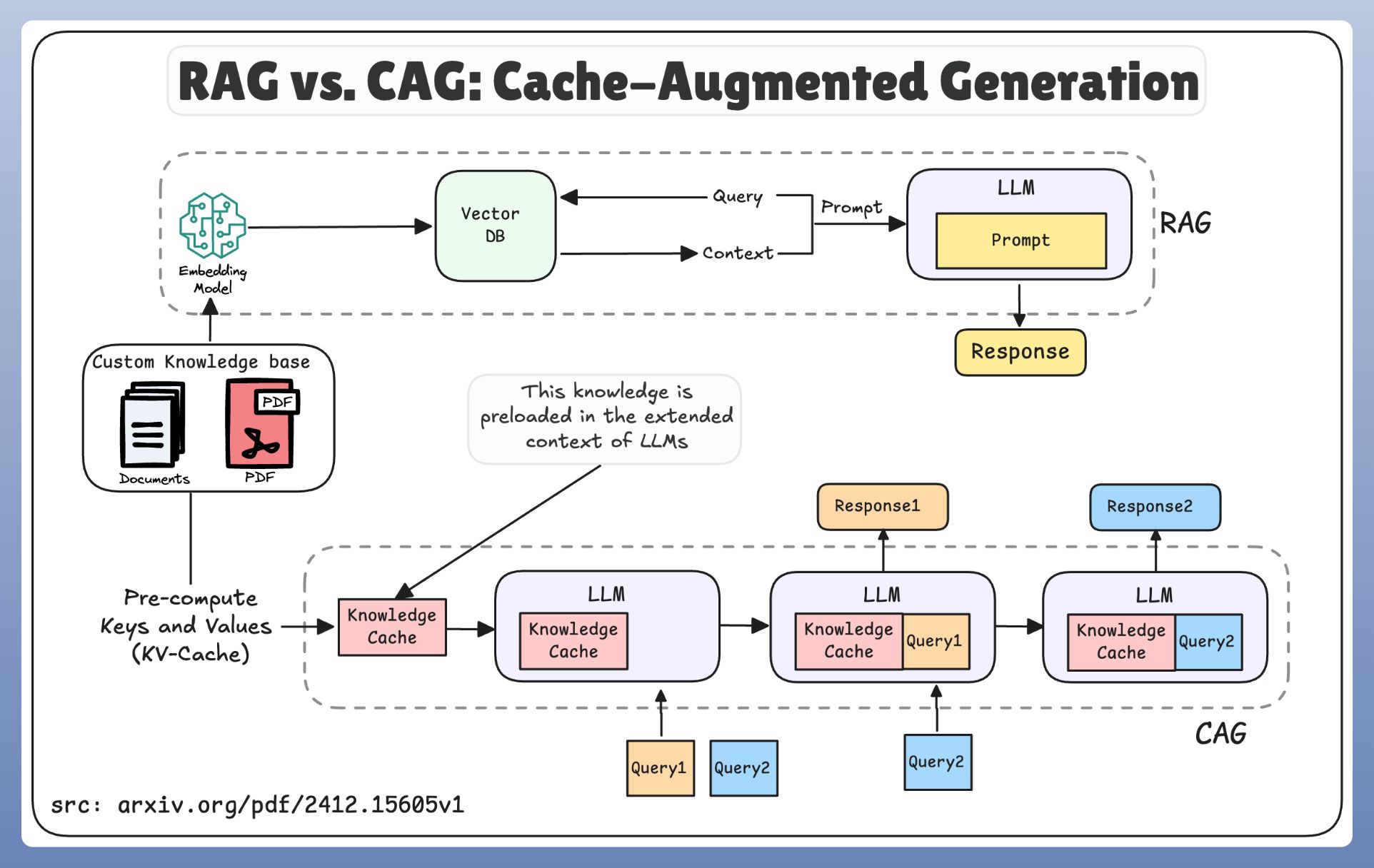

In the rapidly evolving landscape of generative AI, two prominent approaches stand out: Retrieval-Augmented Generation (RAG) and Conversational AI Generation (CAG). Both aim to generate human-quality text, but they differ significantly in methodology and application. They are not mutually exclusive, however, and can be combined effectively.

Retrieval-Augmented Generation (RAG)

RAG models enhance the capabilities of large language models (LLMs) by incorporating an information retrieval step. The process typically involves:

- Retrieval: Given a user query, the model first retrieves relevant documents or passages from a knowledge base. This could involve techniques like:

    - Vector Similarity Search: Embedding the query and documents into a vector space and finding the closest matches.

    - Keyword-Based Search: Identifying documents containing relevant keywords.

- Generation: The LLM then uses the retrieved information, along with the original query, to generate a response. This allows the model to:

    - Access up-to-date information beyond its training data.

    - Provide more contextually relevant and accurate answers.

    - Cite sources and improve the transparency of its responses.
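To make the two steps concrete, here is a minimal Python sketch, not a production RAG implementation. `DOCS` is a hypothetical in-memory knowledge base, and `embed` uses bag-of-words counts as a stand-in for a learned embedding model. The LLM call itself is omitted: `build_prompt` only assembles the text that would be sent to one, numbering each passage so the answer can cite its sources.

```python
import math
import re
from collections import Counter

# Hypothetical toy knowledge base, for illustration only.
DOCS = {
    "doc1": "RAG retrieves passages from a knowledge base before generating an answer.",
    "doc2": "Vector similarity search compares embeddings of the query and the documents.",
    "doc3": "Keyword search matches documents that contain the query terms.",
}

def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())

def embed(text: str) -> Counter:
    """Stand-in 'embedding': a bag-of-words count vector.
    A real system would call a learned embedding model here."""
    return Counter(tokenize(text))

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def vector_search(query: str, k: int = 2) -> list[str]:
    """Vector similarity search: rank documents by cosine similarity to the query."""
    q = embed(query)
    ranked = sorted(DOCS, key=lambda d: cosine(q, embed(DOCS[d])), reverse=True)
    return ranked[:k]

def keyword_search(query: str) -> list[str]:
    """Keyword-based search: return documents sharing at least one term with the query."""
    terms = set(tokenize(query))
    return [d for d, text in DOCS.items() if terms & set(tokenize(text))]

def build_prompt(query: str, doc_ids: list[str]) -> str:
    """Generation input: number each retrieved passage so the LLM can cite it as [1], [2], ..."""
    context = "\n".join(f"[{i}] ({d}) {DOCS[d]}" for i, d in enumerate(doc_ids, start=1))
    return (
        "Answer using only the sources below and cite them by number.\n"
        f"{context}\n\nQuestion: {query}\nAnswer:"
    )
```

For example, `vector_search("how does vector similarity search work", k=1)` returns `["doc2"]`, while `keyword_search` on the same query also matches `doc3` because it shares the word "search". Passing the retrieved ids to `build_prompt` produces the retrieval-grounded input for the generation step.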

Key Characteristics of RAG:

- Knowledge-Intensive: Relies on external knowledge sources.

- Factuality: Aims to provide accurate and verifiable information.

- Transparency: Can often cite sources for its claims.

- Suitable for: Question answering, information retrieval, and tasks requiring access to external knowledge.

Conversational AI Generation (CAG)

CAG focuses on generating coherent and engaging responses in a conversational context. While CAG models can be based on LLMs, the focus is on creating a natural and interactive dialogue. CAG does not necessarily incorporate an explicit external retrieval step.
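The following is only a sketch under stated assumptions. One common ingredient of a dialogue system is carrying recent turns into every prompt so that replies stay coherent with what was already said. The `Conversation` class and its `max_turns` window are hypothetical, there is deliberately no retrieval step, and the model call is omitted.

```python
from collections import deque
from dataclasses import dataclass

@dataclass
class Turn:
    role: str  # "user" or "assistant"
    text: str

class Conversation:
    """Keeps the most recent turns so each prompt carries dialogue context.
    max_turns is a hypothetical budget; real systems usually budget by tokens."""

    def __init__(self, system: str, max_turns: int = 6):
        self.system = system
        # A bounded deque silently drops the oldest turn once it is full.
        self.history: deque[Turn] = deque(maxlen=max_turns)

    def add(self, role: str, text: str) -> None:
        self.history.append(Turn(role, text))

    def build_prompt(self, user_message: str) -> str:
        # No external retrieval: the model sees only the system instruction,
        # the recent dialogue, and the new message.
        lines = [f"System: {self.system}"]
        lines += [f"{t.role.capitalize()}: {t.text}" for t in self.history]
        lines.append(f"User: {user_message}")
        lines.append("Assistant:")
        return "\n".join(lines)
```

A sliding window keeps the prompt bounded at the cost of forgetting older turns; summarizing dropped turns is a common alternative.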

Key Characteristics of CAG:

- Dialogue-Focused: Designed for interactive conversations.

- Coherence and Flow: Emphasizes the natural flow of conversation.

- Engagement: Aims to create engaging and interesting interactions.

- Suitable for: Chatbots, virtual assistants, and conversational interfaces.

RAG as a Foundation for Caching, and Combining RAG and CAG

It's important to note that RAG can also form the foundation of a caching mechanism. By storing frequently retrieved information together with the responses generated from it, a RAG system can significantly reduce latency and computational cost for repeated queries. This cached information can then be used to enhance the performance of a CAG system.
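The caching idea can be sketched as a small least-recently-used (LRU) cache placed in front of an expensive retrieve-and-generate call. This is an illustrative assumption rather than a prescribed design: `ResponseCache`, `get_or_compute`, and the exact-match key normalization are hypothetical, and real systems often also match semantically similar queries (for example, by embedding distance) instead of exact strings.

```python
from collections import OrderedDict
from typing import Callable

class ResponseCache:
    """Hypothetical LRU cache mapping a normalized query to a stored response."""

    def __init__(self, capacity: int = 128):
        self.capacity = capacity
        self._store: OrderedDict[str, str] = OrderedDict()
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(query: str) -> str:
        # Normalize case and whitespace so trivially different phrasings share a key.
        return " ".join(query.lower().split())

    def get_or_compute(self, query: str, compute: Callable[[str], str]) -> str:
        key = self._key(query)
        if key in self._store:
            self.hits += 1
            self._store.move_to_end(key)  # mark as most recently used
            return self._store[key]
        self.misses += 1
        response = compute(query)  # e.g. retrieval + generation (the expensive path)
        self._store[key] = response
        if len(self._store) > self.capacity:
            self._store.popitem(last=False)  # evict the least recently used entry
        return response
```

Exact-match keys are cheap and never serve an answer to a different question, at the cost of missing paraphrases such as "Explain RAG" vs. "What is RAG?".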

More generally, RAG and CAG can be used together effectively. For instance, a system can use RAG to retrieve relevant information and then leverage CAG to generate a conversational response based on that information. The result is a system that is both informative and engaging.
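A minimal sketch of that combination, assuming a hypothetical in-memory knowledge base `KB` and a simple term-overlap `retrieve` function standing in for real retrieval: the retrieved passages ground the reply, while the prior turns keep it conversational. The LLM call is again omitted; `build_turn` only assembles the prompt.

```python
import re

# Hypothetical knowledge base; a real system would query a vector store.
KB = [
    "RAG retrieves relevant passages before the LLM generates a response.",
    "CAG focuses on coherent, engaging multi-turn dialogue.",
]

def tokens(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(query: str, k: int = 1) -> list[str]:
    """RAG step (stand-in): rank passages by how many query terms they share."""
    q = tokens(query)
    return sorted(KB, key=lambda p: len(q & tokens(p)), reverse=True)[:k]

def build_turn(history: list[tuple[str, str]], user_message: str) -> str:
    """CAG step: wrap the retrieved facts in the ongoing dialogue."""
    facts = "\n".join(f"- {p}" for p in retrieve(user_message))
    dialogue = "\n".join(f"{role}: {text}" for role, text in history)
    return (
        "You are a friendly assistant. Ground your reply in these facts:\n"
        f"{facts}\n\nConversation so far:\n{dialogue}\n"
        f"User: {user_message}\nAssistant:"
    )
```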

Fine-Tuning Considerations

Both RAG and CAG can benefit from fine-tuning.

- RAG Fine-Tuning:

    - Fine-tuning the retrieval component can improve the relevance of retrieved documents. This might involve adjusting the embedding model or the similarity metric.

    - Fine-tuning the LLM on question-answering datasets can improve its ability to generate accurate responses based on retrieved information.

- CAG Fine-Tuning:

    - Fine-tuning on conversational datasets can improve the model's ability to generate coherent and engaging dialogue.

    - Reinforcement learning from human feedback (RLHF) can be used to further refine the model's responses and align them with human preferences.

Fine-tuning adapts the models to specific domains and tasks, which can significantly improve performance.
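As a small illustration of why the similarity metric in the RAG retrieval step matters, the sketch below ranks the same two documents with a raw dot product and with cosine similarity. The vectors are made up for illustration; the point is that the dot product rewards vector magnitude while cosine similarity compares only direction, so switching metrics can change which passages are retrieved.

```python
import math
from typing import Callable

Vector = list[float]

def dot(a: Vector, b: Vector) -> float:
    """Raw inner product: sensitive to both direction and magnitude."""
    return sum(x * y for x, y in zip(a, b))

def cosine(a: Vector, b: Vector) -> float:
    """Inner product of unit-normalized vectors: sensitive to direction only."""
    return dot(a, b) / (math.sqrt(dot(a, a)) * math.sqrt(dot(b, b)))

def rank(query: Vector, docs: dict[str, Vector], metric: Callable[[Vector, Vector], float]) -> list[str]:
    """Order document ids from most to least similar under the given metric."""
    return sorted(docs, key=lambda d: metric(query, docs[d]), reverse=True)

# Made-up 2-D vectors; real embeddings have far more dimensions.
query = [1.0, 0.0]
docs = {
    "close_direction": [0.9, 0.1],  # nearly parallel to the query
    "large_magnitude": [3.0, 3.0],  # 45 degrees away, but a long vector
}

print(rank(query, docs, dot))     # large_magnitude ranks first
print(rank(query, docs, cosine))  # close_direction ranks first
```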

Key Differences Summarized

| Feature | RAG | CAG |
|---|---|---|
| Knowledge Source | External knowledge base | Primarily relies on internal LLM knowledge |
| Focus | Factuality and information retrieval | Conversational flow and engagement |
| Retrieval Step | Explicit retrieval of relevant documents | Typically no explicit retrieval step |
| Transparency | Can often cite sources | May not provide sources |
| Cache Foundation | Can be used as a cache foundation | Less commonly used as one |
| Combination | Can be combined with CAG | Can be combined with RAG |

Conclusion

RAG and CAG represent distinct approaches to generative AI, each with its own strengths and weaknesses. However, they are not mutually exclusive and can be combined effectively. RAG excels in tasks requiring access to external knowledge and factual accuracy, while CAG is better suited to creating engaging and natural conversational experiences. The choice between the two, or the decision to combine them, depends on the specific application and the desired characteristics of the generated text. Fine-tuning is a powerful tool for improving the performance of both RAG- and CAG-based systems.